Publications

Selected first-author publications are highlighted on the homepage. This page includes those papers together with additional collaborative work across LLM serving, sparse attention, and video generation.

NeurIPS 2025 Spotlight

Adaptive Sparsity

Long Context

Twilight

Adaptive attention sparsity with hierarchical top-p pruning.

ICML 2025

Semantic Sparsity

Sparse Attention

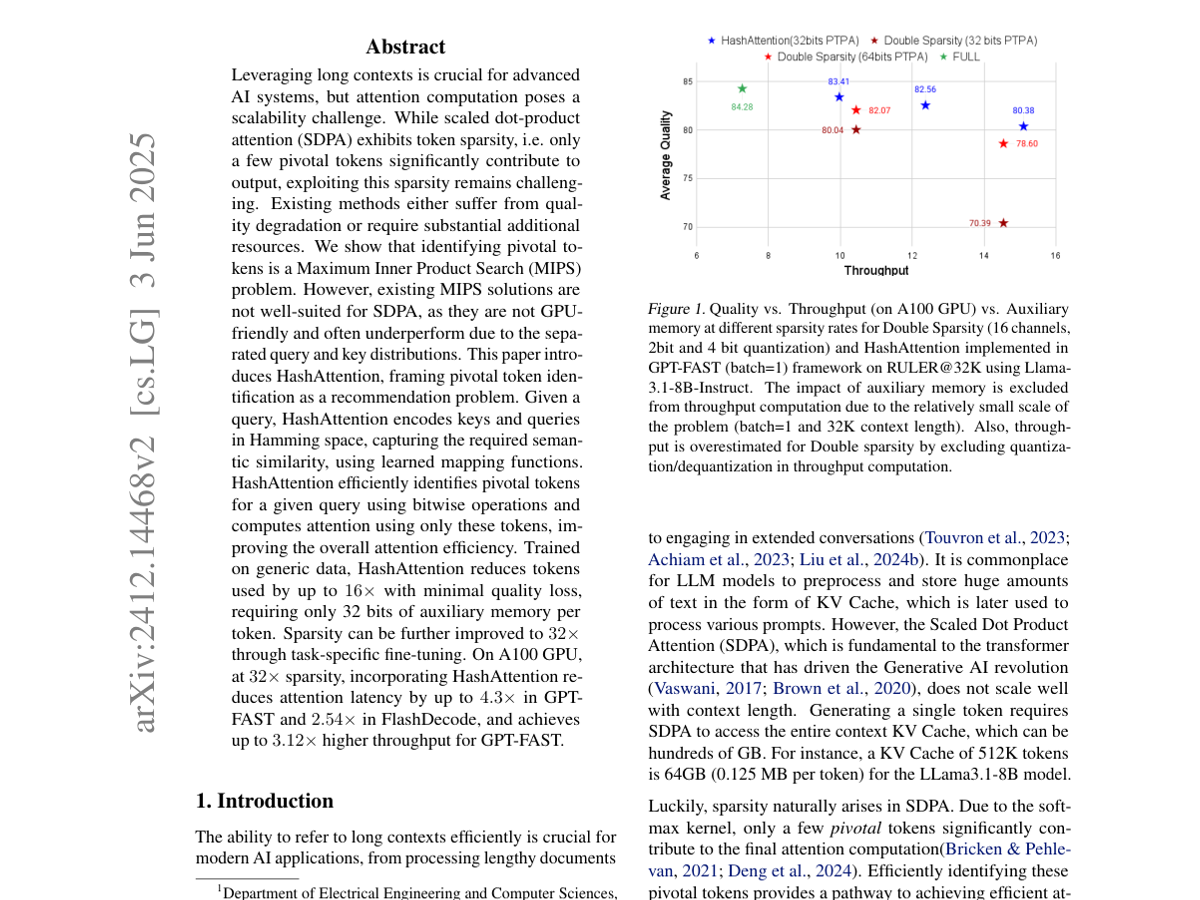

HashAttention

Semantic sparsity for faster inference.

Sparse Attention

KV Cache

LLM Inference

Post-Training Sparse Attention with Double Sparsity

Sparse attention for reducing KV-cache bandwidth in LLM inference.

MLSys 2024

LoRA Serving

CUDA Kernels

S-LoRA

Serving thousands of concurrent LoRA adapters.

Data Quality

Benchmark Contamination

Evaluation

Rethinking Benchmark and Contamination for Language Models with Rephrased Samples

Decontamination and benchmark overlap analysis for language models.