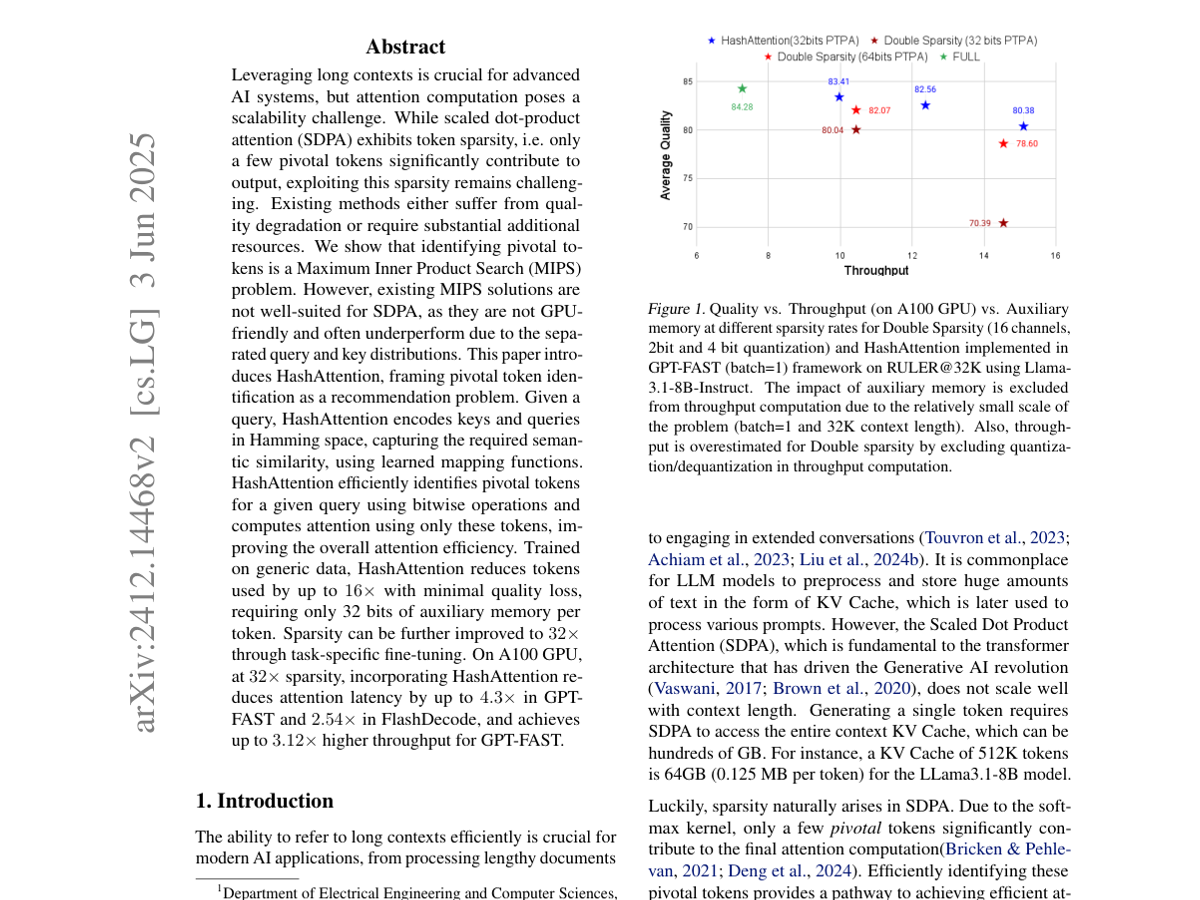

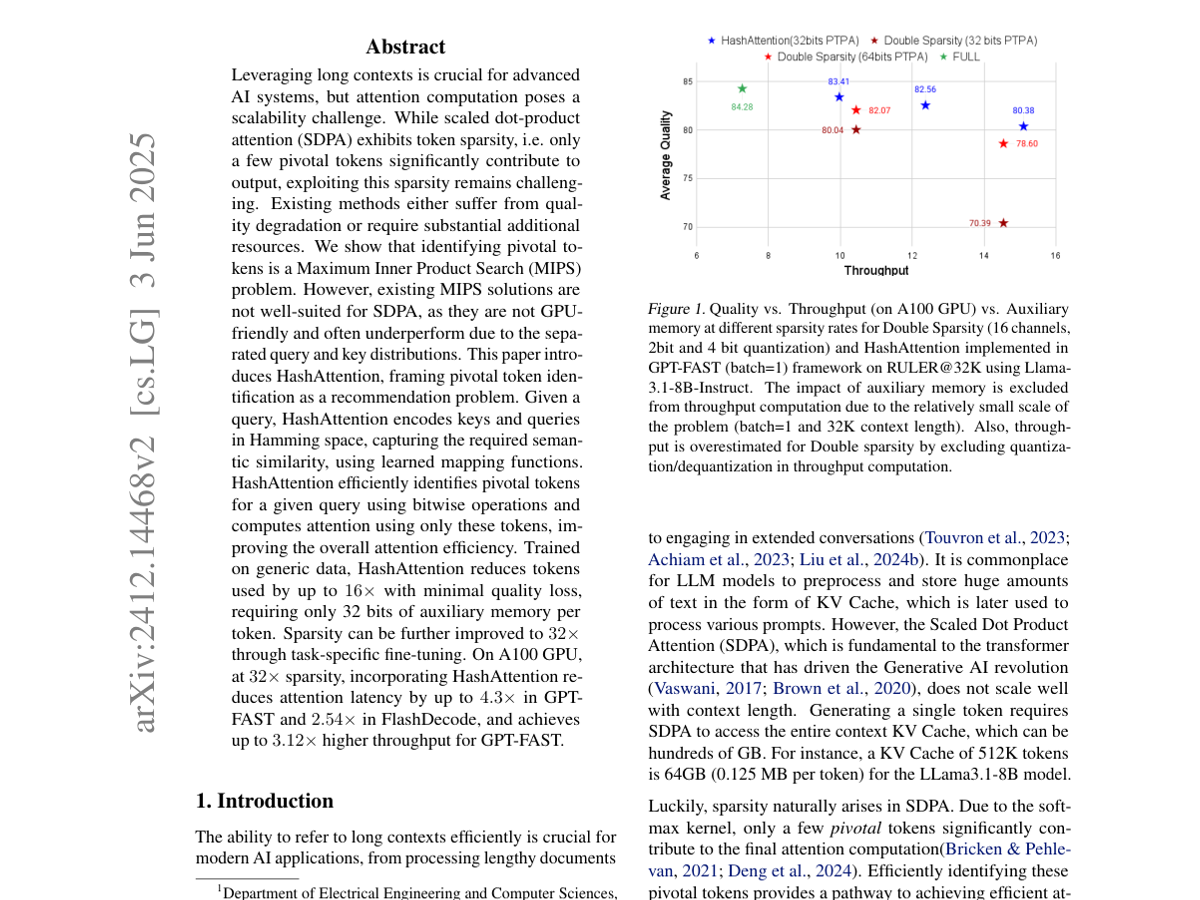

HashAttention

Aditya Desai, Shuo Yang, et al.

Jan 1, 2025

HashAttention frames pivotal-token identification as a recommendation-style semantic sparsity problem and accelerates attention using GPU-friendly hashing and bitwise operations.
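
To make "hashing and bitwise operations" concrete, here is a minimal sketch of how pivotal tokens could be picked by comparing bit signatures. The summary above does not describe the actual hashing scheme, so random sign projections are used here as a stand-in assumption, and every name (`make_planes`, `bit_signature`, `hamming`, `select_pivotal`) is hypothetical rather than taken from HashAttention itself.

```python
import random


def make_planes(dim, n_bits, seed=0):
    """Draw n_bits random hyperplanes (assumed stand-in for the real mapping)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_bits)]


def bit_signature(vec, planes):
    """Pack the sign of each projection into one integer, one bit per plane."""
    sig = 0
    for plane in planes:
        dot = sum(v * p for v, p in zip(vec, plane))
        sig = (sig << 1) | (1 if dot >= 0 else 0)
    return sig


def hamming(a, b):
    """Hamming distance between two signatures: XOR, then count set bits."""
    return bin(a ^ b).count("1")


def select_pivotal(query, keys, planes, k):
    """Return the indices of the k keys whose signatures are closest to the query's."""
    q_sig = bit_signature(query, planes)
    key_sigs = [bit_signature(key, planes) for key in keys]
    ranked = sorted(range(len(keys)), key=lambda i: hamming(q_sig, key_sigs[i]))
    return sorted(ranked[:k])


planes = make_planes(dim=4, n_bits=16)
query = [1.0, 2.0, -0.5, 0.3]
keys = [[-x for x in query], list(query)]
pivotal = select_pivotal(query, keys, planes, k=1)
```

In a real decoder the key signatures would be computed once and cached, so each new query costs only one signature plus XOR and bit-count operations over the cache; full attention is then evaluated on the selected indices alone.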