I am a member of the Sky Computing Lab and LMSYS. My work spans the full stack of machine learning systems: kernel optimization at the hardware-software boundary, efficient system design for large-scale inference and generation, and text and multimodal algorithms that benefit from those systems advances.

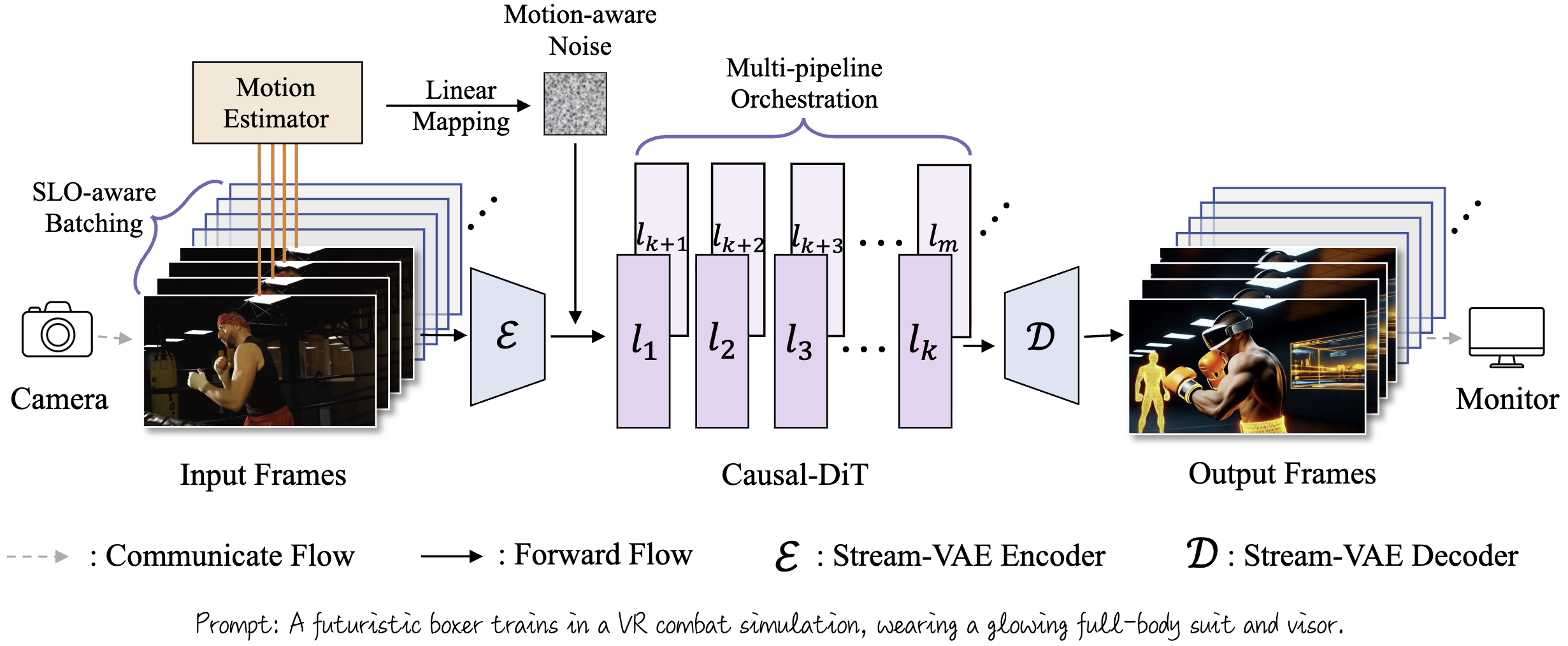

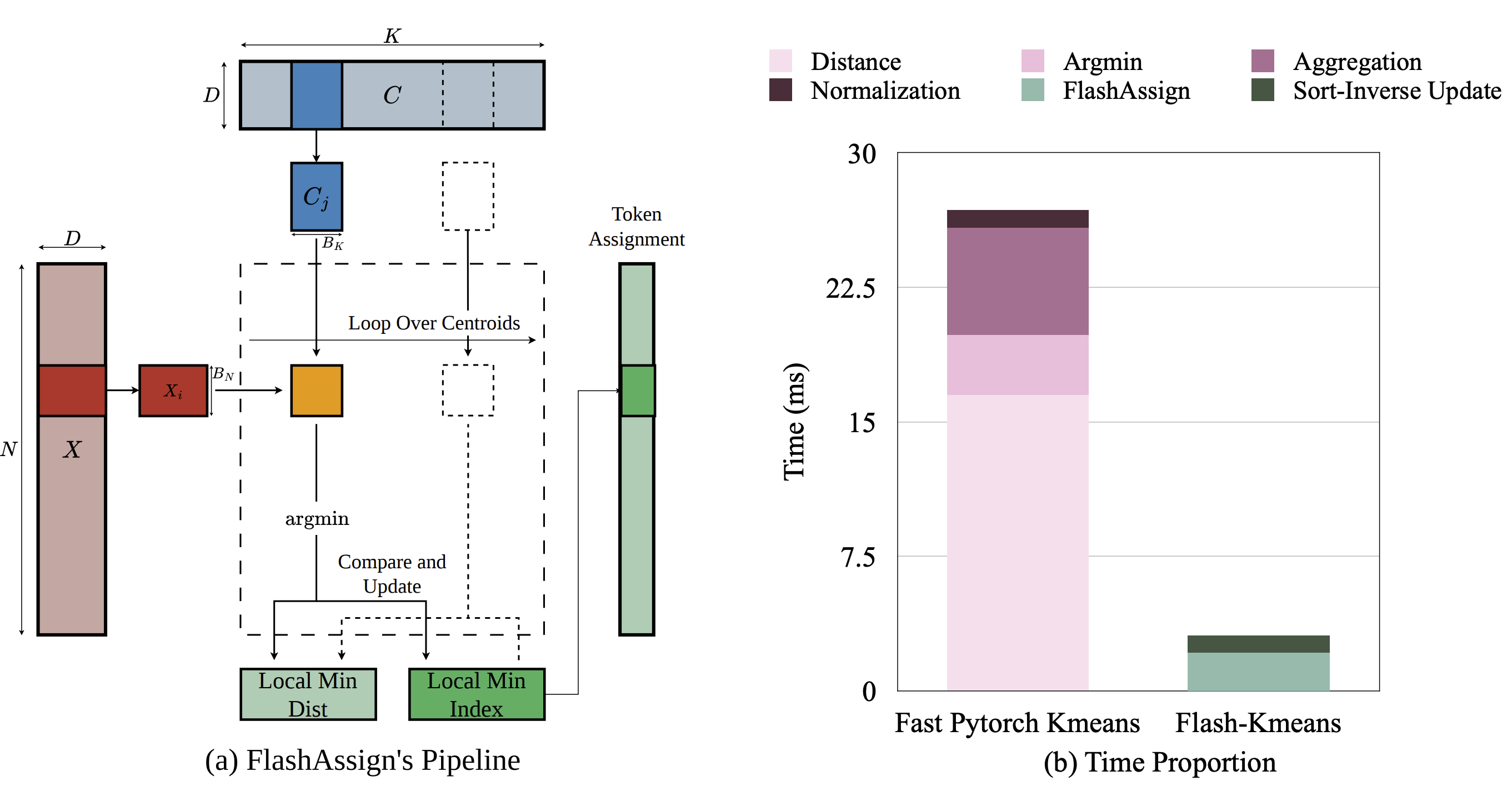

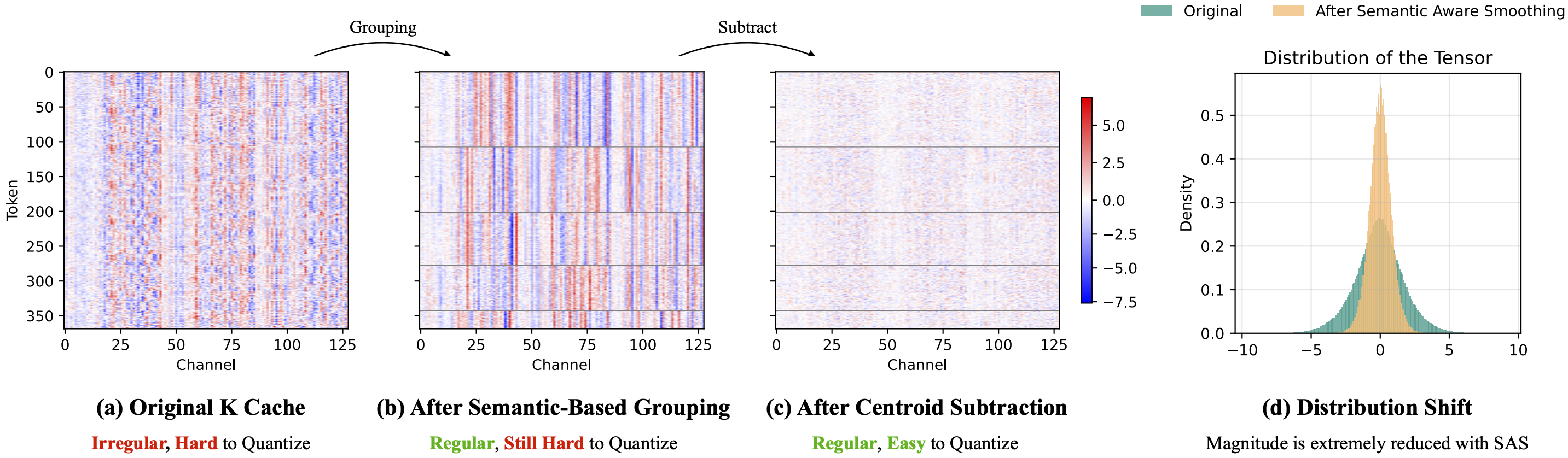

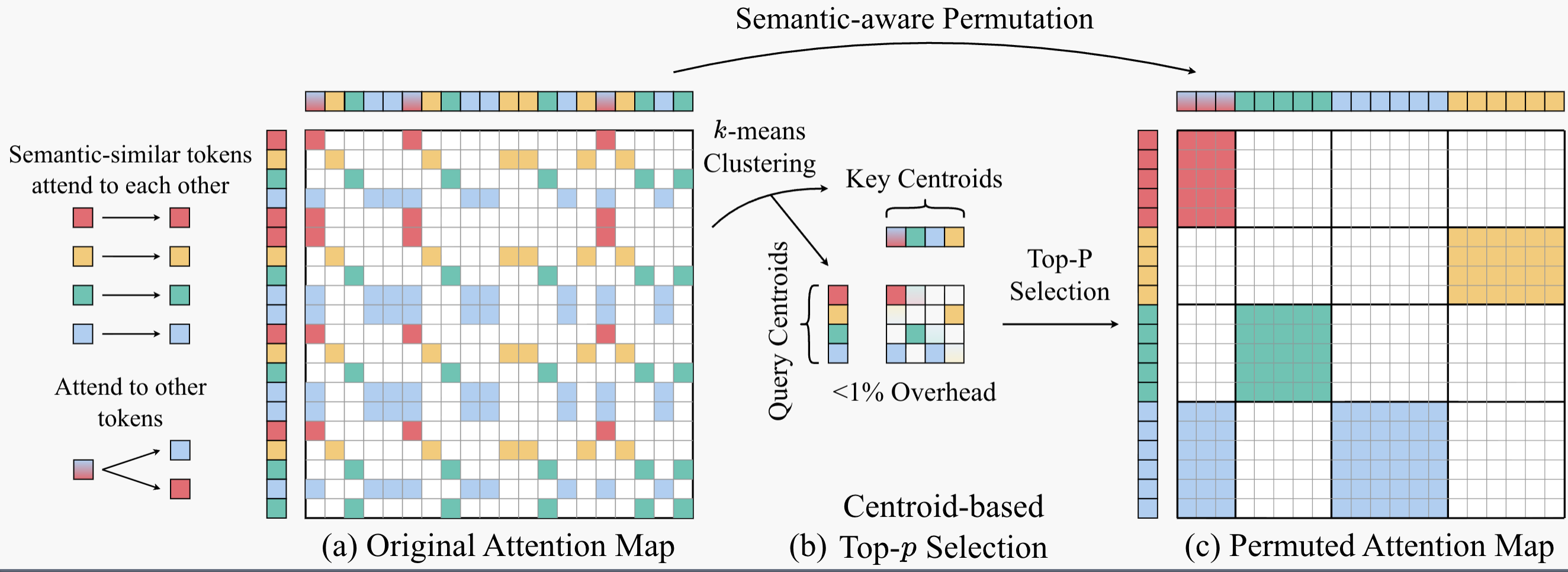

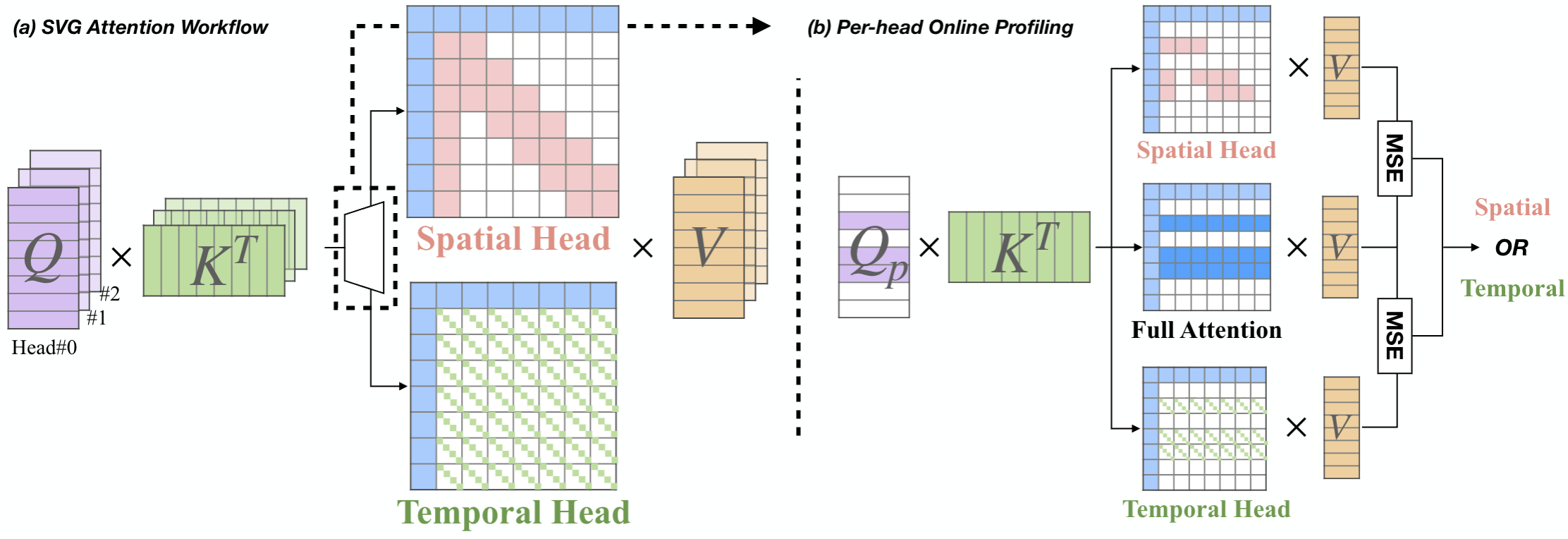

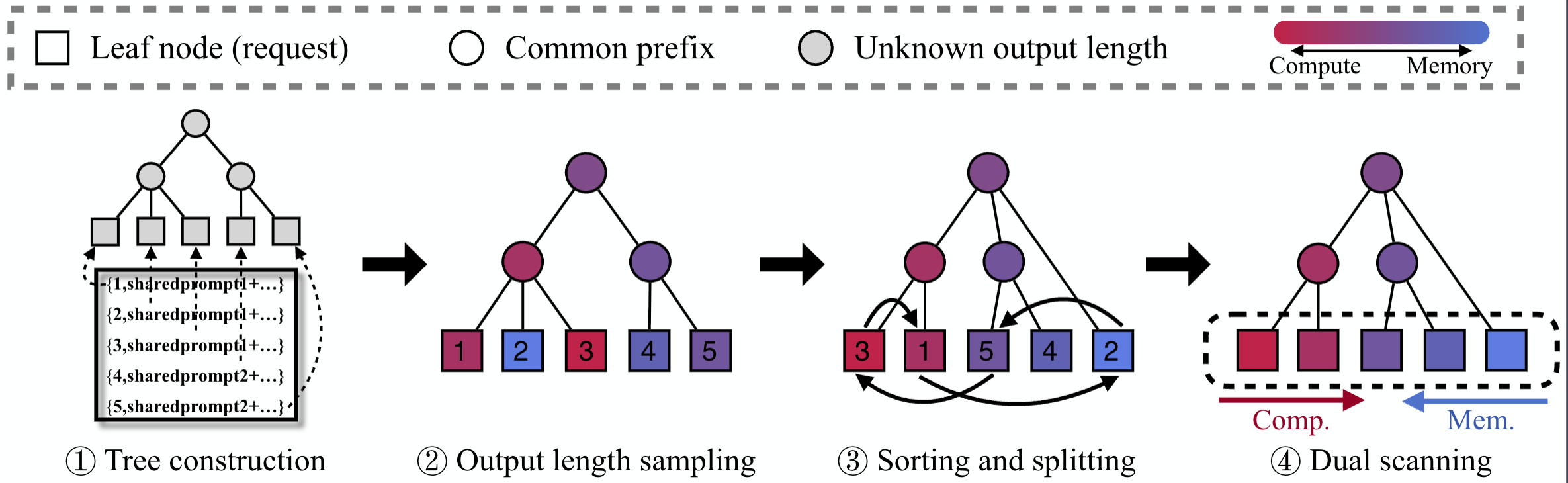

I am especially interested in algorithm-system co-design: building methods that are not only theoretically appealing but also practical and efficient when deployed at scale. Recent projects span LLM serving, sparse attention, exact GPU K-Means, and efficient video generation.

Recent highlights include the Amazon AI PhD Fellowship, a research scientist internship at Amazon Neuron Science, and an upcoming research internship at Meta.

Previously, I graduated from the ACM Honors Class at Shanghai Jiao Tong University.